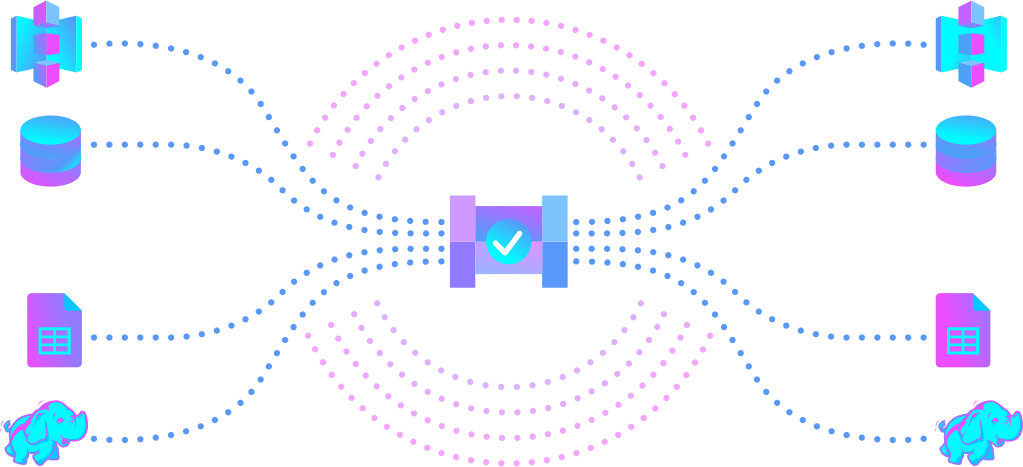

Think of a data pipeline as a city's network of roads, moving traffic smoothly from one end of town to the other. In the world of data, pipelines do the same job. They are carefully designed systems that automate the movement and transformation of data from its origin to a destination where it's primed for analysis or action.

In the workshop of data management, these pipelines act as the assembly lines. Here, data undergoes a transformation process: it’s extracted from its original location, cleaned up, possibly reformatted, and checked for quality before being deposited where it can be most useful.

What’s a Data Pipeline Anyway?

Picture a data pipeline as the assembly line of the data world. It’s a set of automated steps that take data from point A to point B, cleaning it up and shaping it along the way so it’s ready for action. In a factory, products move along a conveyor belt, getting assembled, tested, and packaged. Similarly, in data management, these steps might include pulling data out of its source, changing its format, and making sure it’s accurate and complete.
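To make the assembly-line picture concrete, here is a minimal sketch of those steps as plain Python functions: extract rows from a source, transform them into a consistent format, validate them for completeness, and load them into a destination. Everything here is hypothetical and chosen for illustration: the `RAW_CSV` sample stands in for a real source, and a Python list stands in for a database or feature store.

```python
import csv
import io

# Hypothetical raw export: inconsistent spacing and one incomplete record.
RAW_CSV = """customer_id,signup_date,monthly_spend
101,2024-01-05, 42.50
102,2024-02-11,
103,2024-03-02,17
"""

def extract(raw_text):
    """Point A: pull rows out of the source (here, an in-memory CSV string)."""
    return list(csv.DictReader(io.StringIO(raw_text)))

def transform(rows):
    """Reshape each row: strip stray whitespace and convert fields to proper types."""
    cleaned = []
    for row in rows:
        spend = row["monthly_spend"].strip()
        cleaned.append({
            "customer_id": int(row["customer_id"]),
            "signup_date": row["signup_date"].strip(),
            "monthly_spend": float(spend) if spend else None,
        })
    return cleaned

def validate(rows):
    """Quality check: keep only records with every field present."""
    return [r for r in rows if all(v is not None for v in r.values())]

def load(rows, destination):
    """Point B: deposit the clean records where they will be used."""
    destination.extend(rows)
    return destination

def run_pipeline(raw_text):
    warehouse = []  # hypothetical stand-in for a real database or feature store
    return load(validate(transform(extract(raw_text))), warehouse)

if __name__ == "__main__":
    for record in run_pipeline(RAW_CSV):
        print(record)
```

Keeping each stage a separate function mirrors the conveyor belt: stages can be tested, swapped, or reordered independently. Production pipelines usually hand this orchestration to a dedicated tool, but the shape of the flow stays the same.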

This automated flow means data reliably arrives where it needs to be, in the format that's most useful. That matters a lot for machine learning, where the success of algorithms depends heavily on the quality and availability of data.

Powering Up Machine Learning with Data Processing Workflows

Machine learning is all about letting computers learn from data. The better and more relevant the data, the smarter the computer’s decisions and predictions. But getting data ready for machine learning can be a big task.

It might need cleaning up, or we might need to pick out specific features that are important for the algorithms. Data processing workflows are a lifesaver here, handling all these steps automatically and making sure machine learning models have a steady supply of the data they need. Here’s why automated data pipelines are a big deal for machine learning:

- Efficiency and Speed: They take over the grunt work of preparing data, so data scientists can spend more time on the really important stuff, like refining algorithms and making models smarter.

- Scalability: They’re built to handle loads of data. As a company grows and deals with more information, data processing workflows can grow too, keeping everything running smoothly.

- Consistent, Quality Data: By validating and filtering records along the way, they help make sure only the good stuff gets through to your machine learning models, which means you can trust the outcomes a lot more.

- Up-to-the-Minute Insights: Some data processing workflows can process information right as it comes in, helping machine learning models make predictions and decisions based on the very latest data.
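The last two points above, quality gating and processing data as it arrives, can be sketched with Python generators, which handle one record at a time instead of waiting for a full batch. This is a minimal illustration under stated assumptions: the `incoming` list is a hypothetical stand-in for a real message queue or sensor feed, and a rolling mean is just one example of a feature a model might consume.

```python
from collections import deque
from typing import Iterable, Iterator

def parse_events(lines: Iterable[str]) -> Iterator[float]:
    """Turn raw readings into numbers as they arrive, skipping malformed ones."""
    for line in lines:
        try:
            yield float(line)
        except ValueError:
            continue  # quality gate: bad readings never reach the model

def rolling_mean(values: Iterable[float], window: int = 3) -> Iterator[float]:
    """Emit an up-to-date feature after every new reading."""
    recent = deque(maxlen=window)  # keeps only the latest `window` readings
    for value in values:
        recent.append(value)
        yield sum(recent) / len(recent)

if __name__ == "__main__":
    incoming = ["10", "12", "oops", "14", "20"]  # hypothetical sensor feed
    for feature in rolling_mean(parse_events(incoming)):
        print(f"latest rolling mean: {feature:.2f}")
```

Because each stage pulls from the previous one lazily, a fresh feature value is available the moment a new reading lands, which is the property that lets models act on the very latest data.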

Setting Up Successful Data Highways

Building a data pipeline that stands the test of volume and complexity involves several foundational steps:

- Identify Your Unique Data Needs: Start with a blueprint. Determine what your machine learning initiative demands in terms of data variety, volume, and velocity. Understanding these requirements upfront can save you from roadblocks down the line.

- Select the Right Tools for the Journey: Not every tool in your kit will be right for the job. It’s essential to choose technology and software that align with your project’s specific needs and seamlessly integrate with your existing data architecture.

- Guardrails and Governance: Just as cities have traffic laws and regulations, your data pipeline needs rules and protocols to ensure data moves securely and in compliance with legal standards. Establishing robust data governance and security measures is non-negotiable to protect sensitive information and maintain the integrity of your data processes.

- Regular Maintenance and Monitoring: Check your pipeline's performance regularly, make adjustments as needed, and keep its components up to date so your data keeps flowing reliably and efficiently.
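As one lightweight way to act on the monitoring advice above, the sketch below wraps a pipeline stage so it logs how long the stage took and how many rows went in and out, and raises a warning when a stage discards an unusually large share of its input. The `monitored` decorator, its `max_drop_ratio` threshold, and the `drop_missing` stage are all hypothetical names chosen for illustration; real deployments typically send these numbers to a metrics or alerting system rather than a log.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("pipeline")

def monitored(max_drop_ratio=0.5):
    """Wrap a pipeline stage to log its runtime and row counts.

    Warns when a stage discards more than `max_drop_ratio` of its input,
    which often signals an upstream data problem.
    """
    def decorator(stage):
        @functools.wraps(stage)
        def wrapper(rows):
            start = time.perf_counter()
            result = stage(rows)
            elapsed_ms = (time.perf_counter() - start) * 1000
            log.info("%s: %d rows in, %d rows out, %.1f ms",
                     stage.__name__, len(rows), len(result), elapsed_ms)
            if rows and (len(rows) - len(result)) / len(rows) > max_drop_ratio:
                log.warning("%s dropped more than %.0f%% of its input",
                            stage.__name__, max_drop_ratio * 100)
            return result
        return wrapper
    return decorator

@monitored(max_drop_ratio=0.25)
def drop_missing(rows):
    """Hypothetical cleaning stage: discard rows with any missing value."""
    return [r for r in rows if None not in r.values()]

if __name__ == "__main__":
    sample = [{"x": 1}, {"x": None}, {"x": None}, {"x": 4}]
    drop_missing(sample)  # logs counts, then a warning: half the rows were dropped
```

A sudden spike in dropped rows or runtime is often the first visible symptom of a changed upstream source, so tracking these two numbers per stage gives an early signal that the pipeline needs attention.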

Wrapping Everything Up

By transforming the chaotic influx of data into structured, validated, and ready-to-use information, data processing workflows are the unsung heroes behind machine learning's success. They ensure that businesses drowning in data can still surface with valuable insights that drive informed decisions, innovation, and streamlined operations.

As data plays an ever-larger role in decision-making and strategic planning, reliable data pipelines become a crucial foundation for innovation, efficiency, and sustained growth.